news The Commonwealth Bank of Australia is currently reeling with internal chaos and some service delivery problems, following what appears to be a disastrous misapplication of an operating system patch to thousands of desktop PCs and hundreds of servers last week.

According to sources, on Thursday last week a patch was issued using Microsoft’s System Center Configuration Manager (SCCM) remote deployment tool. It appears as if the patch was intended to be distributed to a number of the bank’s desktop PCs only, but it was mistakenly applied to a much wider swathe of the bank’s desktop and server fleet than was intended.

In a statement last week, the bank didn’t provide any details of what it said was “a problem with an internal software upgrade”, and played down the issue. It noted that while the vast majority of its more than 1,000 branches were offering full services and its ATM, Internet and phone banking services were unaffected, about 95 branches were only offering limited services, such as access to automatic teller machines.

“Our branch staff in each affected branch are available as usual to assist our customers with their enquiries,” the bank said in a statement issued on Friday. “Customers may experience some increased wait times in some of our branches and when calling our call centres. Our priority is on restoring all services as quickly as possible and we apologise for the inconvenience.”

However, internally at CommBank, the situation appears to be far more dramatic than it looks from an external customer point of view. Late last week, sources said that some 9,000 desktop PCs, hundreds of mid-range Windows servers (sources said as high as 490) and even iPads had been rendered unusable due to software corruption issues associated with the patch.

One of the bank’s IT services partners, HP, is believed to have allocated additional resources in an emergency effort to re-image the servers and desktop PCs from scratch with the bank’s standard operating environment and other platforms where appropriate, with the bank lodging a ‘P1’ highest priority incident notice with the company. Internally, some staff at HP have been told to throw every resource possible at the situation. CommBank’s own backup and restore teams are also believed to be throwing resources at the issue wholesale.

Over the past several days Delimiter has received a number of tips from CommBank staff and the customer’s partners with respect to the issue. “I work at Commonwealth Bank Place and in the last two hours several colleagues have had SOE patches pushed out which have totally killed the machines resulting in No Operating System found messages,” one unverified tip stated.

“Execs have received emails advised that all machines at [Commonwealth Bank Place] are to be logged out and removed from the network immediately,” wrote another. “Apparently a virus has been mentioned, from what I have seen it would perhaps be in the SOE update?” And a third comment was: “Heard the one about the CBA losing 20% of their laptops this afternoon after a mysterious system update rebooted machines without notice and into a system error screen?”

One industry source with knowledge of the situation said they had never seen a situation quite like it in Australia. The problem is believed to have affected around a quarter of the bank’s desktop PC machines.

opinion/analysis

Thousands of desktop PCs down, hundreds of mid-range Windows servers offline and even iPads being rendered inoperable, with an outsourcer throwing every available resource at the situation and even some bank branches unable to serve customers? Well, I wouldn’t say it’s the biggest IT disaster Australia has ever suffered (most would say Customs’ Integrated Cargo System catastrophe was the biggest), but in terms of one-off enterprise IT outages that interrupt normal business, it doesn’t get much worse than this. I would say CommBank will still be fixing this situation for months to come.

The damage to the desktop machines is bad enough. But it’s in the damage to the mid-range Windows servers that the real pain will be felt at the bank. With email usually stored remotely and backed up pretty rigorously, and most documents these days stored on network drives, it’s normally not that huge a deal to have a desktop PC fleet suffering problems (although to see 9,000-odd machines go down in one go really beggars belief). Even if data is lost, the desktop PCs can be re-imaged and providing basic services to staff pretty quickly — ideally using the same remote deployment tools which caused this headache in the first place.

But servers are a completely different beast altogether. Server admins know that most servers are pretty customised to fit the specific need they’re serving. That configuration usually isn’t something you can easily re-image from a base install package — and meanwhile, although the desktop PCs might be back up soon, the servers providing services across CommBank will still be down, helping to further cripple its operations. I really don’t envy the task of the admins at CommBank and HP for the next few months. I wouldn’t be surprised to find out that quite a few people had their annual leave cancelled as all hands will be needed to resolve these issues.

And all, or so it would appear, from one little operating system patch issued mistakenly to the wrong list of machines. Centralised software deployment and administration is fantastic, of course, but when it goes wrong, it really goes wrong. There will be more than a few red faces and angry meetings about this one before all is said and done. Let’s just hope the bank didn’t lose too much data along with the downtime. And I look forward to the inevitable internal investigation and report allocating blame for this little issue.

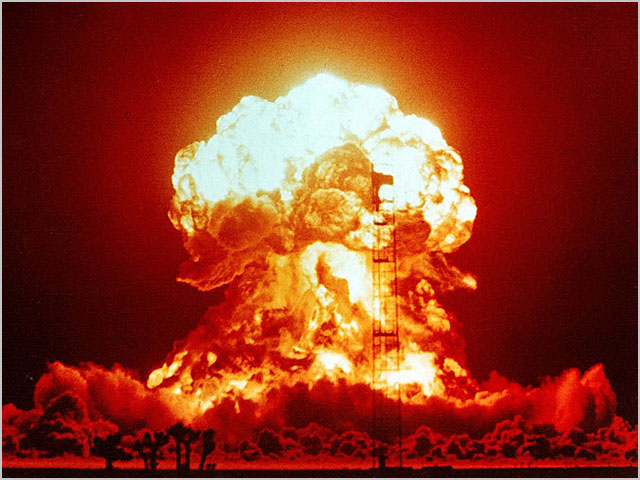

Image credit: US Government, Creative Commons

I know nothing about the circumstances of this outage, but there’s an increasing shift toward full configuration automation on servers. If they have this sort of thing in place, as well as a well-tested bare-metal restore procedure, then many of these servers can probably be re-imaged as easily as the desktop machines.

As always, it’ll be the few exceptions to the rule that occupy the most time and effort!

I’m gonna call bullshit on the iPad’s being rendered inoperable. I honestly can’t see how a Windows SOE update could take out an iPad…

yup agree on this as well.

just my little tidbit, I’ve seen a newbie use SCCM to accidentally roll out MS Project to every desktop, laptop, tablet and server in an organisation before.

Both hilarious and horrifying at the same time.

Hilarious and horrifying is the correct phrase here.

Not sure about the iPads — that’s just what I was told. It does seem a little weird.

Not questioning you mate, just questioning the technical knowledge of the CBA sources. There were a couple of comments from sources in the article about CBA dumping Lync a while back that didn’t make sense to me either, so I’m just a little suspicious of what they have to say now ;)

No worries, question away! I like it when people question my articles – it helps make them better :) In my view, that is a large part of what the comments field is for — as long as it’s polite ;)

ipads could be useless if the patch ruined the citrix/email servers… pretty much that’s all ipads get used for in business anyway.

You do know there is a lot more you can do with an enterprise deployment of iDevices than just connect them to Exchange with ActiveSync? It is very possible that a problem with the back end infrastructure that they are designed to work with can make these iDevices for all intents and purposes useless.

yeah, but it’s pretty rare to find.

I’ve only ever seen the standard citrix/ActiveSync combo.

What if the ipad applications relied on an IP address, and they use windows servers to issue DHCP….

one word … microsoft … says it all :)

@ nobby6

Seriously? Something that looks a lot like ‘User Error’ is a fault with Microsoft?

SCCM has the ability to allow restrictions to be put in place so certain people can access certain things (with a hell of a lot of granularity) – so either they weren’t restricted, or someone who should’ve known better did something wrong.

Renai – it’s “System Centre Configuration Manager” by the way ;) Not “Service Center”

the words you are looking for are ‘user error’, not ‘microsoft’.

easily able to cause chaos on linux, unix, mac os, or any other operating system if you don’t know what you are doing…

try again, troll.

user error, really, share with us all this insightful evidence of your copy of the internal post incident report? We are all waiting

You can always tell the microsoft zealots round here

^^he’s right. I think this MS product became self aware and started attacking the machines on the network. It’s only a matter of time before Redmond launches an all out nuclear war on us all.

last time i checked, sccm will only do what you tell it to do…. i am willing to bet my house that this was user error and not the fault of sccm.

if proof is your bugbear, perhaps provide your proof that sccm has done this before? and by ‘this’, i mean that sccm has enacted an update or machine-updating operation all by itself…

don’t need to be a microsoft zealot to know that you can cause trouble in any OS if you don’t have training or knowledge.

seems that hp staff admins have deployed the task to the wrong collection. seems like human error to me.

back in your hole, troll.

This insider says it was a bad setup by an HP engineer, NOT MICROSOFT! Although, HP is blaming MS for not building in a safeguard… Sigh.

http://myitforum.com/myitforumwp/2012/08/06/sccm-task-sequence-blew-up-australias-commbank/?utm_source=twitterfeed&utm_medium=twitter&utm_campaign=sccm-task-sequence-blew-up-australias-commbank

Haha. There is a safeguard. The Summary screen right before you click finish. It gives you a list of what’s about to happen and gives you one last chance to review before you go ahead to commit the changes.

Just proves yet again – you can’t fix stupid.

Come on, isn’t *someone* going to tell us which KB article the patch is related to?

Or was it one of those hotfix ones where you need to specifically request it….

As of about an hour ago my local (regional Qld) branch was still unable to accept/process cash deposits. Teller reported that “the tech just flew in from Brisbane” and they expected to be up and running soon.

BINGO – Reimaging Like a Boss!

If they have SCCM they should be able to do that remotely…

I can’t imagine that this was caused by a MS patch/hotfix. The “no operating system found” sounds like a bad MBR.

So either they have a virus, or someone released an SCCM sequence that repartitioned disks. Incorrectly. To every single desktop and server. Step away from the SCCM console…

This reminds me of a bad day 12 years ago when an upgrade to the Novell Netware client that I deployed got one key file corrupted on the deployment server, halfway through, disconnecting everyone from their mail. Only 150 people though, and it was found & fixed 1 hour.

Well, they have the tools, and no doubt Microsoft support coming out their ears. They should be able to rectify this very quickly with SCCM too…a bit of bootrec / fixmbr scripting.

Stop, Microsoft support? Please don’t say it again, I’m laughing too hard.

a co-worker tells me that commbank is predominantly NT4… can anyone confirm this?

I don’t think it was a microsoft patch. More probably they sent out a “rebuild patch” to computers but got the collection query wrong and sent it out to all computers. Ipads probably affected due to radius/certificate/e-mail or some other required infrastructure servers being down.

If it was a standard microsoft patch, we would have heard more about it.

Commbank hasn’t used NT4 for about 10 years. I used to work on their IT systems about 7 years ago, and we had just upgraded to Win2K.

“Commbank hasn’t used NT4 for about 10 years.”

I’m sure, like every major organisation, CBA has a few NT4 boxes/virtual machines squirreled away somewhere ;)

Westpac was still running Win 3.11 into the 2000’s – on 486’s no less.

As a consultant specializing in System Center, I can understand how someone (who wasn’t careful) can make this mistake. I can imagine it now… the poor guy who did this… his stomach must have sunk once he realized what happened. SCCM is a beast, and once you kick off a process, it’s very hard to stop. Usually the only sure fire method is to create an airgap in the network cable. But if the clients have already received policy (which they obviously did in this case), then it’s all over.

Anyway I think the lesson for all of us in this is:

1. This is Exhibit A of why there’s testing, testing and more testing before deploying to production.

2. This is a good argument for role based access control – people who aren’t absolutely sure about what they are doing shouldn’t have the keys to the castle.

3. This is also a good example to review change control practices.
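The three lessons above can be sketched as a simple pre-deployment gate. This is only an illustration in Python, not anything SCCM provides: the `DeploymentRequest` fields, the role name and the 5% blast-radius limit are all assumptions invented for the example.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed values for this sketch only; a real shop would set its own.
ALLOWED_ROLES = {"deployment-admin"}
MAX_FLEET_FRACTION = 0.05


@dataclass
class DeploymentRequest:
    """Hypothetical description of a pending deployment (not an SCCM object)."""
    name: str
    target_count: int             # devices in the target collection
    fleet_size: int               # devices in the whole estate
    piloted: bool                 # lesson 1: tested on a pilot group first?
    requester_role: str           # lesson 2: role of the person pushing the button
    change_ticket: Optional[str]  # lesson 3: approved change record, if any


def blocking_reasons(req: DeploymentRequest) -> List[str]:
    """Return every reason the deployment should be held; empty means go."""
    reasons = []
    if not req.piloted:
        reasons.append("not piloted")
    if req.requester_role not in ALLOWED_ROLES:
        reasons.append("requester lacks deployment role")
    if not req.change_ticket:
        reasons.append("no approved change ticket")
    # Extra guard: a target that covers a large share of the fleet is suspicious.
    if req.fleet_size > 0 and req.target_count / req.fleet_size > MAX_FLEET_FRACTION:
        reasons.append("target collection exceeds blast-radius limit")
    return reasons
```

The last check is the one that matters most for an "All Systems" style mistake: a target collection that suddenly covers most of the estate is held for review even if everything else looks approved.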

“an airgap in the network cable”

rofl

You can just imagine this poor guy. I wonder if they actually considered pulling the cable out of the wall. That would have been hilarious :)

You’re kidding right?

SCCM interface is the most un-intuitive, ugly and confusing POS you can get in this modern day.

I recognize the tool for the powerhouse of what it could do, but to someone who doesn’t use/configure it day in and out it might as well be in hieroglyphics.

So I guess the lesson for CommBank here is…

WANTED: SCCM Engineer. Must have experience.

:P

Never dealt with phone systems then i take it.

PRICELESS!

http://memegenerator.net/instance/24149650

+1 for appropriate

+1 for using reddit ;)

You know…I was just thinking about this. I don’t think it could have been a Windows update that did this. You ever tried to install a Windows 7 update on a Windows 2008 box (or vice versa)? It won’t install – it says “Not for this operating system” or words to that effect.

I’m wondering if someone advertised an OS deployment task sequence to All Systems (or another collection they weren’t supposed to), and that’s what’s wrecked everything. Food for thought.

Yup, my thoughts as well. However I have witnessed some people in the past extract the MSIs and re-package them themselves for various reasons. If the package didn’t have the proper “Applies To” OS settings configured, that could explain why they’re in a world of pain if it was advertised to the wrong collection.

R/Sysadmin post: http://www.reddit.com/r/sysadmin/comments/xdftm/disastrous_patch_cripples_commbank_australia/

[–]Pict – System Center dude

Insider information: HP Services were responsible. Most user devices have failed. Majority of servers required to rebuild the environment were in fact rebuilt themselves. Root cause seems to have been SOE task Sequence mandatory deployed to an All Systems type Collection.

Meme has been updated for the lulz…

http://memegenerator.net/instance/24202627

Technology is only part of the overall process. People and defined processes play a key part in this jigsaw and it only takes for part of this to break down for situations like this to arise. I can’t see how the finger can be pointed at the product when it was only doing what it was instructed to do. Had it not done as instructed then sure the situation would have been avoided but the product would then be criticised for not working as it should.

This is not the first time this has happened and certainly won’t be the last. It’s a wake up call to everyone using any kind of systems management product to re-evaluate what they are doing, how and who is pushing the buttons.

‘One of the bank’s IT services partners, HP, is believed to have allocated additional resources in an emergency effort to re-image the servers and desktop PCs from scratch with the bank’s standard operating environment and other platforms where appropriate, with the bank lodging a ‘P1′ highest priority incident notice with the company. Internally, some staff at HP have been told to throw every resource possible at the situation. CommBank’s own backup and restore teams are also believed to be throwing resources at the issue wholesale.’

HP were the ones at fault here! What makes things even worse is that CBA have made the decision to outsource their entire Service Desk to HP – wonder if the contracts were signed yet?

CBA outsourced their IT back in 1997 to EDS now HP!!!

Bare metal restore – LMAO! Great sales myth.

With USMT to migrate the data? lol

Oh wow. I love the way this article paints HP as the helpful company coming to the rescue – HP was entirely to blame for this screwup! I suspect commbank will be seriously considering ripping that contract up…

It would be interesting to find out if the stuff-up was done onshore or offshore.

Anyone with inside information?

Off shore

*Cough* NZ *Cough*

Kinda amazing,

HP wins huge contract to look after CBA then due to poor controls and vendor management an absolute cluster fuck like this occurs

TAKE NOTE BIG 4 CIOS dont outsource critical functions like that

always keep the mission critical stuff in house only ever outsource the menial functions that no-one wanted to do anyway

Like OS deployments in SCCM.

Ah wait…

From what i hear there was a test to see if they can recover from an outage but they forgot to put in the test PC’s in the script so it went to all devices.

here are the affected items.

-Many variations in the current state of the workstations and servers.

-8610 devices have received/accepted the advert (workstations and servers)

-Advert is no longer available/has been disabled.

-240 Branch & Domain Controllers affected

-958 MAC Airs & Pros

-6226 DELL Desktops

-697 DELL Laptops

-92 Servers affected

Wow. Simply WOW. Thanks for that information.

I guess on the bright side… at least they know SCCM works. amiright?

And they get to properly test their recovery procedures. Testing procedures against “test” PC’s is for novices. Full respect to CBA and HP :)

I am surprised that is a small number of machines. I was expecting it to have hit a lot more given the size of the company. I guess they got lucky and the SCCM infrastructure got taken out by the Advert early.

no, most of the sccm server infrastructure, not a server, dc/apps etc, with an sccm agent installed, was still intact. it did however impact other management systems…

Happy to confirm your stats are spot on! HP have flown in hundreds of engineers from all over the world to begin the task of re-building most of the environment from the ground up. I am a former HP employee (5 weeks removed) and still have very good connections back into the company.

The sad part is that the user error originated in New Zealand. One might question why this function was being performed across the pond? Well, HP has a penny pinching policy of near shoring core IT services functions to low-cost regions. It’s pretty sad that CommBank would allow staff from its (supposed) Tier 1 IT Services provider in a different country to bring down circa-70% of its key IT infrastructure!

… And to think this is the same IT Services provider that manages the Australian Taxation Department’s core IT infrastructure services for the next 4 years. Let’s hope our taxation details are safe!

but there were only 2 SME’s who had the responsibility for the sccm infrastructure…ALL of it!

theyStuffed it

Posted 01/08/2012 at 7:54 pm

you are mostly correct. to the other postie, the “problem” did not originate in NZ, it was all onshore. one of the biggest issues with this was an sccm task sequence that was configured to “install on all platforms”, rather than configured for say x86 xp only (well the target pc’s were all x86 xp). the advert was triggered and targeted the all system container. the rest is now history…
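The difference between “install on all platforms” and an explicit platform restriction can be sketched in a few lines. This is a hypothetical Python illustration, not SCCM's own mechanism: the `Device` type, the platform labels and the rule that an empty allow-list is rejected are all assumptions made for the example.

```python
from typing import Iterable, List, NamedTuple, Set


class Device(NamedTuple):
    """Hypothetical inventory record; platform labels are invented for this sketch."""
    hostname: str
    platform: str  # e.g. "xp-x86", "server2008-x64", "macos"


def eligible_devices(devices: Iterable[Device], allowed_platforms: Set[str]) -> List[Device]:
    """Keep only devices whose platform is explicitly allowed.

    An empty allow-list is treated as a mistake rather than as "everything",
    so a deployment cannot silently fall back to all platforms.
    """
    if not allowed_platforms:
        raise ValueError("refusing to deploy: no platform restriction set")
    return [d for d in devices if d.platform in allowed_platforms]
```

With a filter like this, a rebuild meant for x86 XP desktops that is pointed at every system would still only select the XP machines, and a missing restriction fails loudly instead of matching servers and Macs.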

In my company, our old deployment system’s software packages had policies which meant desktop patches and software COULD NOT be applied to servers and/or non intended devices for this very reason. It meant large deployments needed to go through extra process and checks before they would even work. It was recently “upgraded”, against our advice, to another piece of shit which looks lovely (to management) but has nowhere near the checks/controls and levels of automation we had with the old one and provides the ability for anyone dicking around with it to send out any software package to everything – in fact it configures itself to do this by default! – you have to manually exclude the stuff you don’t want it to target. We’ve already had a very expensive piece of software accidentally deployed to a large group of devices totalling around 1 Million worth of additional licencing liability. And another piece of desktop software accidentally hit all servers – Luckily for us it didn’t screw the O/S……yet!

Dear management – when your engineers try and highlight these risks to you – I suggest you listen and take it seriously. :)

Bob

Is it fixed yet?

All i can say is, wow.

The reaction time, redeployment, resources and the time that HP has taken to rebuild, reimage, redeploy is just amazing. Think about it.

All those servers, from Metro to regional areas, all restored within a week. We are talking about full rebuilds here on not only workstations, but servers that take days.

If anything, HP should be proud, and CBA should think twice before shooting whoever at HP, because at the end of the day, if CBA’s IT was controlled and managed by another company, could they have completed this, regardless of “well it shouldn’t have happened in the first place”?

A true test, and good save/win, and a serious eye opener to any other IT company.

UK banks had similar problems. The truth is that much of the technical work is now outsourced to India. While there may be some competent technical types in India, the banks buy services solely on price. The Indians are just after the contract and hire employees as cheaply as they possibly can. So you get a bank, issuing service contracts for the absolute minimum price, but thinking it has pulled one over the contractor by getting such a low price; the Indian outsourcer realizes that there may be problems, but so what, they have a contract and enough weasel words that they are not taking any risks (Indians are very risk averse), and IT systems are complicated enough that the blame can be shuffled so much that no one really gets on top of what happened. Usually some low level worker gets it in the neck, and it ends there. I have worked in the IT industry for 30 plus years. After we started outsourcing to India, I saw some of the poorest quality work that I have ever witnessed. I don’t doubt that Indian technical people could be decent at what they do, but they are largely untrained in the real world, just have dubious academic qualifications which they are attempting to use to secure jobs and usually visas. Can’t blame them for that, but the bank purchasing agents should be shot.

Jim,

Your comment about Indian people is not acceptable, we work hard and have enough technical knowledge thats why we rule silicon valley!! You can churn out figures , how many Indians are working in US and doing great…lot of big inventions in the area of computers come from India people.

What has happened with this Ausi bank was a task sequence sent to a wrong collection. Windows patches can not be installed where they are not needed.

That poor sccm admin made a collection with wrong query which added all workstations and servers to the target collection where the task sequence ( to format) the disk was sent.

Hi WindowsAdmin,

Hiring out a crew of highly trained Indian expats in the silicon valley isn’t a comparison – they still cost as much as non-outsourced employees! This means that to secure lower costs skilled workers are the first point of fail, and quality assurance the second. India has the sheer population to be able to supply a level of tech trained low skilled workers at lower cost.

There’s a bit of socio-cultural research been done around this particular “racism” in the corporate world – I’ll summarise below:

Whilst there are other available centers – the Philippines is an up and coming tech center for example, Indian education standards in the last years have focussed highly on tech literacy, so they’re a natural choice for the IT industry. Unfortunately, this means that you’ve got a huge pool of medium skilled workers that you can throw en masse at a task. The problem with this comes up in the business cultural differences between historical low population English business culture (Yes america, your system is English too) and the high population Asian business culture.

What do I mean by that? Take the following statement from an Australian Worker :

” Ah, that’s my fault. I wrote the code incorrectly and the system has gone down”

This sort of language isn’t present in an Asian based “Collectivist” culture. As an Indian, the prevailing culture is that you succeed, or fail, as a team – Individualist culture in a western economy, such as admission of guilt, is a powerful tool – after admitting something has gone wrong, it’s an assumption that you will be allowed to try again to fix it, and that your team is not to blame. In a team based culture, one person’s failure is the team’s failure, and as such you’ll go to great lengths to avoid pinning the blame on an individual:

“The team overlooked some key issues and we’re researching how best to resolve it” sounds like weasel words to an Australian company, and with no one to blame, there’s no one with high pressure on to fix it. To cultures that pride themselves getting ahead at the expense of others, this makes working with a differently cultured team difficult, and is part of the reason why Indian outsource suppliers get a terrible rap from these countries. It’s not always that they’re doing the task poorly, but there’s less risk for the individual to check work is perfect – you can always hide behind the team and “share” the blame.

TL; DR:

Somewhere in New Zealand, a tech worker is writing up a resume.

Interesting follow-on article:

http://myitforum.com/myitforumwp/2012/08/06/sccm-task-sequence-blew-up-australias-commbank/?utm_source=twitterfeed&utm_medium=twitter&utm_campaign=sccm-task-sequence-blew-up-australias-commbank

It wasn’t a patch folks. Here’s the scoop:

http://myitforum.com/myitforumwp/2012/08/06/sccm-task-sequence-blew-up-australias-commbank/

Folks, wouldn’t waste your time with that link, apart from the fact if you can bother to painstakingly wait for the site to load, the guy’s no more in-the-know than anyone else around here, with all the other rumours, he’s just another blogger with no evidence.

Nobby6… Sad to hear you say that. Might check your connection.

I’ll have more on the situation, too…and yes…at myITforum.com, we *do* know.

@rod trent,

nothing wrong with my connection mate, I had/have no problems with any other site, just yours, not that I have bothered to check again today, because, well, I think your just spamming, chest beating, or ma……g I wont use that word else Renai will get peeved, but yeah. what I read yesterday is nothing more than any of the tens of other rumours that have been flying around by this blog, other blogs or media speculation, all up no facts by anyone, therefore I have no interest.

Jim. I am totally disagree with your point.I am an Indian. “Humans Do Errors” irrespective of country,language or people.Without errors there wont be any great Inventions in this planet.Let us try to learn from this

Sorry if i commit any grammatical or spelling mistakes in my point :)
